Anthropic to challenge DOD’s supply-chain label in court

The designation could restrict the use of Anthropic’s technology in defense-related projects and prevent contractors working with the U.S. military from using the company’s AI systems.

AI company Anthropic, known for developing the Claude AI model, is preparing to take the U.S. Department of Defense (DOD) to court after the Pentagon labeled the company a “supply-chain risk” to national security.

Anthropic’s leadership says the decision is legally unsound and unprecedented, especially because such supply-chain risk labels are typically applied to foreign adversaries rather than American technology firms.

The case highlights growing tensions between AI companies and governments as artificial intelligence becomes increasingly important for national security and military operations.

Why the Pentagon Labeled Anthropic a Supply-Chain Risk

According to officials, the Pentagon determined that Anthropic’s systems could pose risks within the defense supply chain.

In government procurement, a supply-chain risk designation means that a technology provider might create vulnerabilities in national security infrastructure.

This classification can lead to:

- Restrictions on government contracts

- Limits on the use of the company’s technology by military contractors

- Security reviews of partnerships and infrastructure

If enforced broadly, the designation could significantly reduce Anthropic’s ability to participate in defense-related AI projects.

Anthropic’s Response to the Designation

Anthropic has strongly rejected the Pentagon’s decision and announced plans to challenge the label in federal court.

CEO Dario Amodei said the company had received official notice confirming the designation and that it believes the action lacks a sound legal basis.

The company argues that:

- The decision sets a dangerous precedent for American tech firms

- The label could unfairly damage partnerships and contracts

- The government’s interpretation of supply-chain risk laws may be overly broad

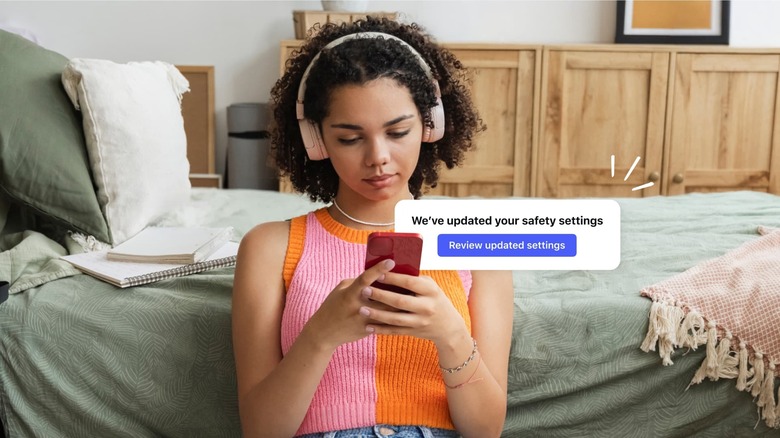

Anthropic has emphasized that the restrictions primarily affect contracts directly tied to the Defense Department, meaning most of its commercial customers remain unaffected.

The Dispute Over Military Use of AI

At the center of the dispute is how Anthropic’s AI systems can be used by the military.

Reports indicate that negotiations between the company and the Pentagon broke down over certain restrictions Anthropic wanted to maintain.

The company reportedly refused to allow its AI to be used for:

- Mass domestic surveillance

- Fully autonomous weapons systems

These limitations conflicted with the military’s position that AI systems used in defense should be available for all lawful applications.

This disagreement ultimately escalated into the current legal battle.

Potential Impact on Defense Technology Partnerships

The Pentagon’s designation could have major implications for AI companies working with government agencies.

If the restriction remains in place, contractors involved in defense projects may have to stop using Anthropic’s technology entirely.

This could affect:

- Military AI research programs

- Defense technology contractors

- Data analysis systems used in security operations

The decision may also reshape how private AI companies collaborate with government agencies in the future.

A Broader Debate About AI and National Security

The conflict between Anthropic and the Pentagon reflects a broader debate about the role of private AI companies in national defense.

Governments increasingly rely on commercial AI technology for:

- Intelligence analysis

- Cybersecurity defense

- Military logistics

- Strategic decision support

However, tensions can arise when companies impose ethical or operational limits on how their technology is used.

As AI becomes central to national security infrastructure, disputes like this may become more common.

What Happens Next

Anthropic’s legal challenge could set an important precedent for how the government classifies technology providers in defense supply chains.

If the company succeeds in court, the ruling could limit the government’s ability to apply such labels to private firms.

If the Pentagon’s decision stands, it may encourage stricter oversight of AI companies involved in national security projects.

Either outcome will likely shape the future relationship between AI developers and military institutions.

Final Thoughts

The clash between Anthropic and the U.S. Department of Defense highlights the growing complexity of regulating artificial intelligence in national security contexts.

As AI systems become increasingly powerful and widely deployed, governments and technology companies must navigate legal, ethical, and strategic questions about how these tools should be used.

The upcoming court battle may determine not only Anthropic’s role in defense technology but also how future AI partnerships between governments and private companies are structured.

FAQ

Why did the Pentagon label Anthropic a supply-chain risk?

The Pentagon determined that the company’s AI systems could pose security risks within defense technology supply chains.

What does a supply-chain risk designation mean?

It means government agencies and contractors may be restricted from using the company’s technology in defense-related operations.

Why is Anthropic challenging the decision?

Anthropic argues the designation is legally unsound and unprecedented for a U.S. technology company.

What is Anthropic known for?

Anthropic is an artificial intelligence company that developed the Claude AI model, which competes with other large AI systems.

Could this affect AI companies working with the government?

Yes. The case may influence how governments regulate and partner with AI companies in national security projects.

When will the court case happen?

Anthropic has signaled its intent to challenge the decision; legal proceedings would begin once the lawsuit is formally filed.