Deepfake Attacks Are Escalating – Enterprises Must Strengthen AI Defenses

As threat actors refine their tools and tactics, enterprises must urgently rethink their AI defense strategies to safeguard sensitive data, preserve customer trust, and protect critical systems from malicious manipulation.

Deepfake technology — AI-generated synthetic media that can convincingly mimic real people’s faces, voices, and behaviors — is rapidly escalating in sophistication and frequency, placing businesses, governments, and individuals at greater risk. What once felt like futuristic dystopian fiction has now become a very real threat in areas such as corporate security, fraud prevention, political manipulation, and digital brand integrity.


What Are Deepfakes — And Why They Matter

Deepfakes are synthetic audio, video, or images created with generative AI models built on deep neural networks, such as Generative Adversarial Networks (GANs), autoencoders, and diffusion models. These models learn patterns from large datasets of real media and use them to produce remarkably realistic new content.
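For readers curious how a GAN learns to produce convincing fakes, the standard formulation pits two networks against each other. A generator $G$ turns random noise $z$ into a synthetic sample, while a discriminator $D$ outputs the probability that its input is real. $D$ is trained to maximize the value below and $G$ to minimize it:

```latex
\min_G \max_D \; V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big]
  + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
```

In theory, this game reaches its optimum when the generator's output distribution matches the distribution of real data, at which point the discriminator can do no better than guessing. That property is what makes well-trained fakes hard to tell apart from genuine media. This is the textbook objective only; production deepfake tools may use other architectures and training losses.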

Deepfakes matter because they can:

- Mimic executives’ voices or faces

- Forge audiovisual evidence in scams

- Influence public opinion or elections

- Impersonate customers in authentication systems

- Evade traditional fraud detection

As deepfakes become easier to produce and harder to detect, their misuse can cause financial loss, reputational damage, and societal disruption.

The Escalating Threat Landscape

In recent years, deepfake attacks have grown in scope and impact.

High-Profile Examples

Reported incidents fall into several recurring patterns:

- Executive impersonation in fraudulent wire transfers

- Political misinformation campaigns using fabricated speeches

- Social media hoaxes spreading coordinated disinformation

- Audio cloning to bypass voice authentication

Threat actors now include not just hobbyists but sophisticated criminal syndicates and nation-state actors with access to advanced computing resources.

Why Enterprises Are Vulnerable

Enterprises are especially vulnerable due to:

- Heavy reliance on digital communication

- Remote work cultures that trust digital identities

- Increasing automation and AI adoption across workflows

- Legacy security systems that lack deepfake detection

Traditional security defenses — firewalls, password policies, and basic biometric checks — were never designed to counter media manipulation at scale.

Attacks Are Becoming More Accessible

What once required specialized expertise is now within reach of everyday bad actors:

- Deepfake creation tools are widely available online

- Open-source generative AI models lower barriers to entry

- Pretrained voice and face synthesis models require minimal technical skill

As a result, attackers can craft convincing scams and social engineering campaigns in minutes.

Real-World Use Cases of Deepfake Exploitation

Attackers use deepfakes to pursue a range of harmful goals:

- Business Fraud: Audio or video impersonation to authorize wire transfers

- Brand Manipulation: Fake endorsements or damaging media targeting public figures

- Credential Abuse: Defeating voice or facial biometric authentication systems

- Malware Delivery: Deepfake messages that socially engineer recipients into opening malicious links or files

- Political Influence: Synthetic media disseminated to sway public sentiment

In each case, attackers exploit trust in digital channels to achieve real-world consequences.

Strengthening AI Defenses: What Enterprises Must Do

To defend against deepfake threats, enterprises must adopt a multi-layered security strategy that blends technology, processes, and human awareness.

1. Advanced Deepfake Detection Tools

Deploy AI models trained to identify manipulated media by analyzing inconsistencies in:

- Facial micro-expressions

- Lighting and shadows

- Audio artifacts and waveform anomalies

- Metadata traces and editing fingerprints
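Detection pipelines often combine the outputs of several specialized analyzers rather than trusting any single signal. The sketch below shows one simple way to fuse per-signal scores into a single review decision with a weighted average. The analyzer names, weights, and threshold are hypothetical placeholders, not values from any particular product, and the analyzers themselves (which would be trained models) are out of scope.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional

# Hypothetical weights for each analyzer; real systems would calibrate these
# on labeled examples of genuine and manipulated media.
DEFAULT_WEIGHTS: Dict[str, float] = {
    "facial_micro_expressions": 0.3,
    "lighting_and_shadows": 0.2,
    "audio_artifacts": 0.3,
    "metadata_traces": 0.2,
}


@dataclass
class Verdict:
    risk: float              # weighted manipulation score in [0, 1]
    flagged: bool            # True when risk reaches the review threshold
    top_signals: List[str]   # analyzers contributing most, highest first


def fuse_scores(scores: Dict[str, float],
                weights: Optional[Dict[str, float]] = None,
                threshold: float = 0.6) -> Verdict:
    """Fuse per-analyzer scores (0 = looks authentic, 1 = looks manipulated).

    Analyzers absent from `scores` (e.g. no audio track) are skipped and the
    remaining weights are renormalized by dividing by their sum.
    """
    weights = DEFAULT_WEIGHTS if weights is None else weights
    present = {name: w for name, w in weights.items() if name in scores}
    if not present:
        raise ValueError("no recognized analyzer scores supplied")
    total = sum(present.values())
    risk = sum(scores[name] * w for name, w in present.items()) / total
    ranked = sorted(present, key=lambda n: scores[n] * present[n], reverse=True)
    return Verdict(risk=risk, flagged=risk >= threshold, top_signals=ranked[:2])


# Example: a clip for which no lighting analysis was available.
verdict = fuse_scores({
    "facial_micro_expressions": 0.9,
    "audio_artifacts": 0.8,
    "metadata_traces": 0.1,
})
print(verdict.flagged, round(verdict.risk, 4), verdict.top_signals)
```

Renormalizing over the analyzers that actually ran lets the same policy handle, say, a silent video clip with no audio track.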

2. Multi-Factor and Adaptive Authentication

Supplement biometric checks with additional factors such as:

- Device trust signals

- Behavioral biometrics

- Cryptographic authentication tokens

This reduces reliance on voice or face alone, so a cloned voice or synthesized face is not enough by itself to gain access.
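A minimal sketch of how these factors might be combined, assuming a time-based one-time password (TOTP, RFC 6238) as the cryptographic token. The `totp` function follows the RFC's HMAC-SHA1 construction; the `AuthSignals` fields, the score thresholds, and the allow/step-up/deny policy are hypothetical illustrations, and the voice and behavioral scores are assumed to come from separate models not shown here.

```python
import hashlib
import hmac
import struct
from dataclasses import dataclass


def totp(secret: bytes, unix_time: int, step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password per RFC 6238 (HMAC-SHA1 variant)."""
    counter = unix_time // step
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F  # RFC 4226 dynamic truncation
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def verify_totp(secret: bytes, submitted: str, unix_time: int,
                step: int = 30, window: int = 1) -> bool:
    """Accept codes from adjacent time steps to tolerate small clock drift."""
    for k in range(-window, window + 1):
        t = unix_time + k * step
        if t >= 0 and hmac.compare_digest(totp(secret, t, step), submitted):
            return True
    return False


@dataclass
class AuthSignals:
    voice_match: float      # 0..1 from a speaker-verification model (assumed)
    device_trusted: bool    # request comes from an enrolled, managed device
    behavior_score: float   # 0..1 from a behavioral-biometrics model (assumed)
    otp_valid: bool         # result of verify_totp()


def decide(s: AuthSignals, high_value: bool) -> str:
    """Return 'allow', 'step_up', or 'deny' (hypothetical policy)."""
    if s.voice_match < 0.5:
        return "deny"  # the biometric check clearly failed
    # Count only factors that a voice or face clone cannot supply by itself.
    independent = sum([s.device_trusted, s.behavior_score >= 0.7, s.otp_valid])
    required = 3 if high_value else 2
    return "allow" if independent >= required else "step_up"


secret = b"12345678901234567890"  # RFC 6238 test secret; never hard-code real ones
cloned_voice = AuthSignals(voice_match=0.99, device_trusted=False,
                           behavior_score=0.2, otp_valid=False)
print(decide(cloned_voice, high_value=False))
```

The design choice worth noting: a near-perfect voice match, which is exactly what a good voice clone produces, never authorizes anything on its own; it only keeps the request from being rejected outright.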

3. Threat Intelligence and Monitoring

Integrate real-time threat intelligence feeds that flag emerging deepfake campaigns circulating online and on social platforms.
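One way to act on such a feed is to compare incoming images or video frames against perceptual hashes of known deepfakes, which tolerate minor changes such as brightness shifts better than exact file hashes do. This sketch uses average hashing (aHash), the simplest perceptual hash. It assumes, hypothetically, that the feed publishes 64-bit aHash values and that frames have already been downscaled to 8x8 grayscale; production systems generally use more robust hashes.

```python
from typing import List, Set


def average_hash(pixels: List[List[int]]) -> int:
    """aHash: one bit per pixel, set when the pixel is brighter than the mean.

    Assumes `pixels` is an 8x8 grayscale frame (values 0-255) that has
    already been downscaled; resizing is out of scope for this sketch.
    """
    flat = [p for row in pixels for p in row]
    if len(flat) != 64:
        raise ValueError("expected an 8x8 grayscale grid")
    mean = sum(flat) / 64
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")


def matches_feed(frame_hash: int, feed: Set[int], max_distance: int = 5) -> bool:
    """True if the frame is within `max_distance` bits (a hypothetical
    tolerance) of any known-bad hash in the feed."""
    return any(hamming(frame_hash, known) <= max_distance for known in feed)


# Hypothetical feed entry: the hash of a known deepfake frame (a gradient here).
known_fake = [[(r * 8 + c) * 4 for c in range(8)] for r in range(8)]
feed = {average_hash(known_fake)}

# A slightly brightened copy still matches...
brightened = [[p + 3 for p in row] for row in known_fake]
print(matches_feed(average_hash(brightened), feed))
# ...while a visually different frame does not.
inverted = [[255 - p for p in row] for row in known_fake]
print(matches_feed(average_hash(inverted), feed))
```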

4. Employee and Customer Education

Train users to recognize common deepfake cues, such as mismatched lip movement, unnatural blinking, or unusual urgency in a request, and to report suspicious media. Awareness remains one of the strongest defenses.

5. Policy and Governance Controls

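Establish enterprise policies that define how media content must be verified before anyone acts on it, and that restrict how it is shared internally and externally. For example, a policy can require a call-back to an independently sourced phone number before acting on any voice or video request to transfer funds.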
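Verification policies are easier to enforce when a payment or approval workflow checks them automatically. The sketch below encodes one such rule: a mandatory call-back to an independently sourced phone number for large requests that arrive over remote channels. The threshold, channel list, and data model are hypothetical and would need to match an organization's actual policy.

```python
from dataclasses import dataclass
from typing import Dict, List

# Hypothetical policy values; each organization would set its own.
CALLBACK_THRESHOLD = 10_000  # amount at or above which a call-back is mandatory
REMOTE_CHANNELS = {"voice", "video", "email", "chat"}


@dataclass
class PaymentRequest:
    requester: str        # claimed identity, e.g. "cfo@example.com"
    channel: str          # how the request arrived
    amount: float
    callback_number: str  # number the employee actually called back ("" if none)


def policy_violations(req: PaymentRequest, directory: Dict[str, str]) -> List[str]:
    """Return reasons the request may not be executed yet (empty list = OK).

    `directory` maps identities to phone numbers from a trusted internal
    source, never from contact details supplied in the request itself.
    """
    problems: List[str] = []
    if req.channel in REMOTE_CHANNELS and req.amount >= CALLBACK_THRESHOLD:
        expected = directory.get(req.requester)
        if expected is None:
            problems.append("requester not found in trusted directory")
        elif req.callback_number != expected:
            problems.append("no call-back to the directory number on record")
    return problems


directory = {"cfo@example.com": "+1-555-0100"}
urgent_video_request = PaymentRequest("cfo@example.com", "video", 250_000, "")
print(policy_violations(urgent_video_request, directory))
```

Looking the number up in an internal directory matters because a deepfake caller can easily supply a "call me back at" number that they control.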

Regulatory and Ethical Considerations

Governments and regulatory bodies are taking note. Proposed or emerging regulatory actions include:

- Mandating watermarking of AI-generated media

- Requiring platforms to label synthetic content

- Establishing liability standards for misuse of deepfakes

- Setting audit standards for AI systems that produce or analyze media

Enterprises must prepare for compliance with evolving legal frameworks around AI and digital content.

The Future of Deepfake Risks

As synthetic media technology improves, enterprises will face increasingly convincing and dynamic deepfake content that traditional tools can’t easily catch. Future defenses will likely rely on:

- Real-time detection engines embedded in communication platforms

- Blockchain or cryptographic provenance systems

- Federated AI models that share threat patterns without compromising data privacy
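The idea behind cryptographic provenance is to bind a hash of the media to authenticated claims about its origin, so that any later modification becomes detectable. The sketch below is a simplified illustration that uses an HMAC with a shared key as a stand-in for a digital signature; standards such as C2PA instead use public-key signatures and certificates, so anyone can verify a manifest without holding a secret. The manifest fields are hypothetical.

```python
import hashlib
import hmac
import json


def _tag(claims: dict, key: bytes) -> str:
    # Canonical JSON (sorted keys) so identical claims always yield identical bytes.
    payload = json.dumps(claims, sort_keys=True).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def make_manifest(media: bytes, creator: str, ai_generated: bool, key: bytes) -> dict:
    """Bind a SHA-256 hash of the media to claims about its origin."""
    claims = {
        "sha256": hashlib.sha256(media).hexdigest(),
        "creator": creator,
        "ai_generated": ai_generated,
    }
    return {"claims": claims, "tag": _tag(claims, key)}


def verify_manifest(media: bytes, manifest: dict, key: bytes) -> bool:
    """True only if the claims are untampered and still describe this media."""
    claims = manifest["claims"]
    if not hmac.compare_digest(_tag(claims, key), manifest["tag"]):
        return False  # the manifest was altered or authenticated with another key
    return hashlib.sha256(media).hexdigest() == claims["sha256"]


key = b"demo-key-not-for-production"
video = b"example video bytes"
manifest = make_manifest(video, creator="Press Office", ai_generated=False, key=key)
print(verify_manifest(video, manifest, key))
```

Changing a single byte of the media, or flipping the `ai_generated` label in the manifest, causes verification to fail.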

The arms race between deepfake creators and defenders will likely continue, with defense strategies needing continual updates.

Conclusion

Deepfake attacks have evolved from a niche curiosity to a major cybersecurity threat with real financial, reputational, and societal risks. Enterprises cannot afford to treat synthetic media risks as hypothetical — they must build robust AI defense strategies that combine cutting-edge technology, policy controls, and human awareness.

Proactive investment in deepfake detection and AI security infrastructure is no longer optional: it’s a core requirement for any organization that relies on digital identity, media authenticity, and trust in communications.

Frequently Asked Questions (FAQ)

What is a deepfake?

A deepfake is AI-generated or AI-manipulated media that can convincingly simulate a real person’s appearance, voice, or behavior.

Why are deepfakes dangerous?

They can be used for fraud, misinformation, brand damage, social engineering, and authentication bypass.

How can enterprises detect deepfakes?

By using advanced AI detection tools that analyze facial inconsistencies, audio artifacts, and metadata patterns.

Are there regulations around deepfakes?

Regulatory efforts are emerging, including proposals for labeling, watermarking, and legal accountability for misuse.

What should individuals do if they see a deepfake?

Report the content to platform administrators, avoid sharing it, and verify the media against trusted sources.

Will AI ever eliminate deepfakes?

Deepfakes will continue evolving, but better defenses and awareness can reduce their impact and misuse.

Mentioned in this article

Tools

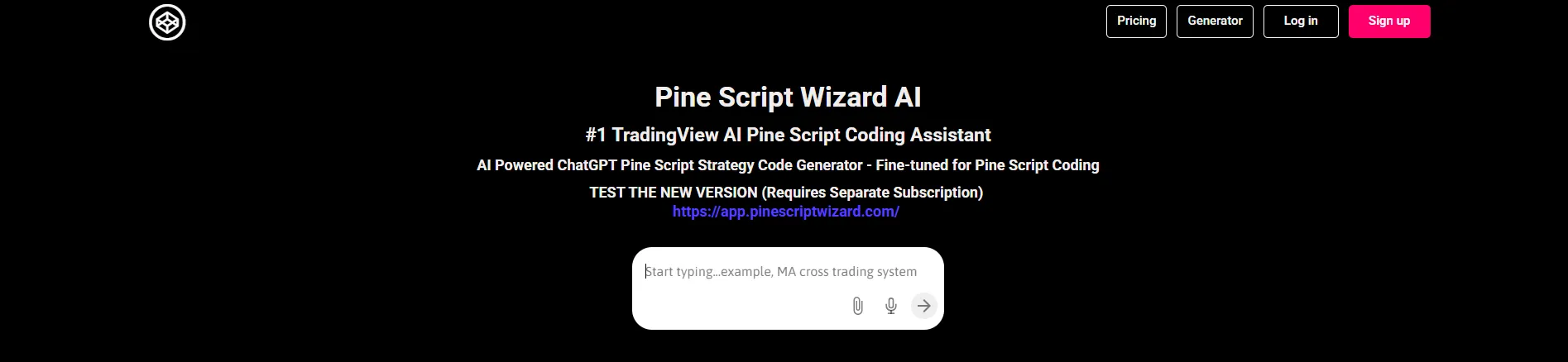

Pine Script Wizard AI

Pine Script Wizard AI is an innovative AI-powered Pine Script generator that empowers traders and

Pine Script Wizard AI is an innovative AI-powered Pine Script generator that empowers traders and developers to create custom trading strategies and indicators for the TradingView platform using natural language. It solves the problem of complex and time-consuming manual coding of Pine Script, a

Beatoven.ai

Beatoven.ai: The Leading AI Background Music Generator for Content Creators Introduction to Beatoven

Beatoven.ai: The Leading AI Background Music Generator for Content Creators Introduction to Beatoven.ai Beatoven.ai stands as a premier AI-powered music generation platform specifically designed for content creators, filmmakers, game developers, and digital marketers. With over 2 million creators us

Mubert

Mubert represents a revolutionary AI music generation platform that democratizes music creation for

Mubert represents a revolutionary AI music generation platform that democratizes music creation for content creators, streamers, filmmakers, and musicians worldwide. By leveraging sophisticated artificial intelligence and machine learning technologies, Mubert generates original, royalty-free music c

Yomu AI

Yomu.ai is an AI-powered writing assistant built specifically for academic writing, essays, and re

Yomu.ai is an AI-powered writing assistant built specifically for academic writing, essays, and research-based content . It helps students, researchers, and professionals structure ideas, write faster, and improve clarity while maintaining an academic and formal tone. Yomu.ai focuses on long-for

Math Solver Microsoft

Microsoft Math Solver is an AI-powered educational tool designed to help students solve math probl

Microsoft Math Solver is an AI-powered educational tool designed to help students solve math problems instantly and learn concepts step-by-step. It uses artificial intelligence to analyze equations, provide detailed explanations, and generate interactive graphs, making math learning easier and mo

Moltbot- Personal AI Assistant

Moltbot is a personal AI assistant designed to help users manage tasks, automate workflows, and bo

Moltbot is a personal AI assistant designed to help users manage tasks, automate workflows, and boost productivity using artificial intelligence. It acts like a digital helper that can assist with scheduling, reminders, note-taking, communication, and everyday work tasks — all through natural lan

Kimi K2

Kimi K2 for AI Agents refers to the use of Kimi K2’s AI-powered presentation and visual content ge

Kimi K2 for AI Agents refers to the use of Kimi K2’s AI-powered presentation and visual content generation capabilities in concert with autonomous AI agents to automate the creation of slides, reports, dashboards, and visual outputs as part of an AI-driven workflow. Instead of manual slide desi

OpenAI Codex

OpenAI Codex is an advanced artificial intelligence model designed to understand natural language an

OpenAI Codex is an advanced artificial intelligence model designed to understand natural language and generate computer code across multiple programming languages. It helps developers write, edit, debug, and explain code using simple instructions, making software development faster and more accessib

Arcads AI

Arcads AI is the premier AI-powered platform for creating professional User-Generated Content (UGC)

Arcads AI is the premier AI-powered platform for creating professional User-Generated Content (UGC) style advertising videos that convert. As a cutting-edge generative AI solution specifically designed for marketing teams, Arcads AI enables brands to produce high-quality, authentic-looking video adv