Microsoft, Google, Amazon say Anthropic Claude remains available to non-defense customers

Major technology companies Microsoft, Google, and Amazon have confirmed that Anthropic’s Claude AI models remain available to non-defense customers, despite recent controversy surrounding the company’s classification within U.S. defense supply chains.

The clarification came after reports that Anthropic had been placed on a U.S. Department of Defense (DoD) supply-chain list, raising questions about whether the company’s AI technology might face broader commercial restrictions.

However, cloud providers hosting Anthropic’s models say that businesses, developers, and research organizations can continue using Claude normally through their respective platforms.

The announcement reassures thousands of organizations that rely on Claude for AI development, automation, and enterprise applications.

What Is Anthropic’s Claude AI?

Claude is a family of advanced large language models (LLMs) developed by the AI research company Anthropic.

The models are designed for tasks such as:

- Writing and summarization

- Coding assistance

- Data analysis

- Customer support automation

- AI-powered research tools

Claude has become a major competitor to other AI systems like OpenAI’s GPT models and Google’s Gemini, especially among enterprise users seeking strong safety and alignment features.

Many companies integrate Claude through cloud platforms rather than running the model themselves.

Why Microsoft, Google, and Amazon Spoke Out

Because Anthropic distributes its models through major cloud providers, any potential restriction could affect a wide ecosystem of developers.

Claude is currently accessible through:

- Amazon Web Services (AWS)

- Google Cloud’s Vertex AI platform

- Microsoft cloud integrations and partner ecosystems

Following speculation about the defense supply-chain designation, the companies clarified that commercial access to Claude remains unchanged for non-military customers.

This reassurance was important because thousands of startups, enterprises, and researchers depend on these platforms to build AI-powered products.

Understanding the Defense Supply-Chain Issue

The controversy stems from a U.S. Department of Defense supply-chain classification, which can cover technology companies whose products are relevant to national security.

Being listed does not necessarily mean a company is restricted from commercial operations.

However, it can raise concerns about:

- Government oversight

- Export controls

- Potential security restrictions

Anthropic has reportedly challenged aspects of this designation, arguing that it could create confusion about the availability of its products for civilian use.

How Claude Is Used by Businesses

Claude is widely used across industries to power AI applications.

Common business use cases include:

- Customer service chatbots

- Automated document analysis

- Software development assistance

- Research and content generation

- Enterprise knowledge management systems

Because the models are accessible through API integrations on cloud platforms, companies can easily incorporate Claude into their internal tools and workflows.

Maintaining uninterrupted access is therefore critical for many AI-driven businesses.
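
As a rough illustration of the integration pattern described above, the Python sketch below keeps the model behind a small routing layer so that an application is not tied to a single cloud platform. Everything in it is an assumption made for illustration: `ModelRouter`, the stub backends, and the provider labels `aws` and `gcp` are hypothetical and do not correspond to any vendor's SDK. A real integration would replace the stubs with calls to the chosen provider's own client library.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional

# A backend is any callable that takes a prompt and returns generated text.
Backend = Callable[[str], str]


@dataclass
class ModelRouter:
    """Hypothetical routing layer: sends a prompt to a named backend."""
    backends: Dict[str, Backend]
    default: str

    def complete(self, prompt: str, provider: Optional[str] = None) -> str:
        # Fall back to the default provider when none is requested.
        name = provider or self.default
        if name not in self.backends:
            raise KeyError(f"no backend registered for {name!r}")
        return self.backends[name](prompt)


# Stub backends standing in for real cloud SDK calls (assumed, not real APIs).
def fake_aws_backend(prompt: str) -> str:
    return f"[aws] {prompt}"


def fake_gcp_backend(prompt: str) -> str:
    return f"[gcp] {prompt}"


router = ModelRouter(
    backends={"aws": fake_aws_backend, "gcp": fake_gcp_backend},
    default="aws",
)

print(router.complete("Summarize this ticket."))                  # [aws] Summarize this ticket.
print(router.complete("Summarize this ticket.", provider="gcp"))  # [gcp] Summarize this ticket.
```

Because application code only calls `router.complete`, switching platforms in this sketch means changing the `default` value or registering a different backend, not rewriting the tools built on top.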

Competition in the AI Cloud Ecosystem

The clarification from Microsoft, Google, and Amazon also highlights the intense competition in the AI cloud infrastructure market.

Each provider is racing to offer customers access to multiple advanced AI models.

Examples include:

- OpenAI models through Microsoft Azure

- Gemini models through Google Cloud

- Anthropic Claude through Amazon Web Services

Providing a variety of AI models allows companies to choose the best system for their specific needs.

Maintaining Claude’s availability helps ensure that the AI ecosystem remains competitive and flexible.

The Broader Debate Around AI and National Security

As artificial intelligence becomes more powerful, governments are increasingly viewing it as a strategic technology with national security implications.

This has led to growing discussions about:

- AI export controls

- Defense partnerships with tech companies

- Regulation of advanced AI systems

- Security risks from AI misuse

Balancing innovation with security has become one of the biggest policy challenges facing the global AI industry.

Companies like Anthropic are navigating this environment while continuing to expand their commercial AI services.

Final Thoughts

The confirmation from Microsoft, Google, and Amazon that Anthropic’s Claude remains available to non-defense customers provides reassurance to the broader AI ecosystem.

Despite ongoing debates about AI regulation and national security, the technology continues to expand rapidly across industries.

For developers and enterprises building AI applications, maintaining access to multiple leading models, including Claude, supports innovation, competition, and flexibility in the evolving AI landscape.

FAQ

What is Anthropic’s Claude?

Claude is a family of advanced AI language models developed by the company Anthropic for tasks such as writing, coding, and data analysis.

Why was Claude’s availability questioned?

Reports about Anthropic being included in a U.S. defense supply-chain classification raised concerns about potential restrictions.

Can businesses still use Claude?

Yes. Microsoft, Google, and Amazon have confirmed that Claude remains available to non-defense customers.

Where can developers access Claude?

Claude is accessible through cloud platforms such as Amazon Web Services and Google Cloud’s Vertex AI, as well as through Microsoft cloud integrations and partner ecosystems.

Why is Claude important in the AI market?

Claude is one of the leading large language models and competes with systems like OpenAI’s GPT and Google’s Gemini.

Does this affect AI regulation?

The situation reflects a broader discussion about how governments should regulate powerful AI technologies while supporting innovation.

Mentioned in this article

Tools

Blink - Ai App Builder

Blink is an AI-powered app builder platform that allows users to create mobile and web application

Blink is an AI-powered app builder platform that allows users to create mobile and web applications quickly without coding . By leveraging artificial intelligence, Blink simplifies app design, logic generation, backend integration, and deployment — enabling entrepreneurs, businesses, and non-techn

Kimi K2

Kimi K2 for AI Agents refers to the use of Kimi K2’s AI-powered presentation and visual content ge

Kimi K2 for AI Agents refers to the use of Kimi K2’s AI-powered presentation and visual content generation capabilities in concert with autonomous AI agents to automate the creation of slides, reports, dashboards, and visual outputs as part of an AI-driven workflow. Instead of manual slide desi

NovelCraft ai

NovelCraft is an AI-powered writing platform designed to help authors, storytellers, and content cre

NovelCraft is an AI-powered writing platform designed to help authors, storytellers, and content creators produce long-form content such as novels, stories, scripts, and ebooks. The platform combines artificial intelligence with structured writing tools to assist users in planning, drafting, editing

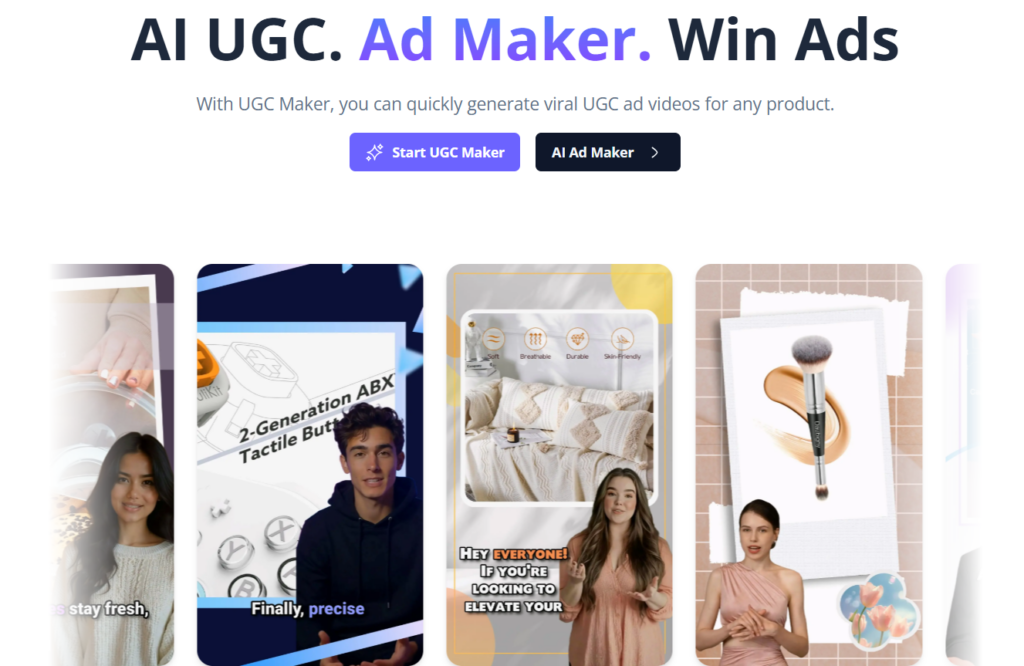

AI UGC Video Gen

AI UGC Video Gen is an AI-powered video creation platform designed to generate realistic user-genera

AI UGC Video Gen is an AI-powered video creation platform designed to generate realistic user-generated content (UGC) style videos for marketing, advertising, and social media. It allows businesses, creators, and marketers to produce engaging promotional videos using artificial intelligence without

Lipsync AI

(Product Features) Audio-driven lip-sync video generator (online) Upload video + audio to generate a

(Product Features) Audio-driven lip-sync video generator (online) Upload video + audio to generate a talking video with lip movements synced to the voice. Two main workflows Image + Audio → Talking portrait Video + Audio → Redub existing video Long Mode (longer outputs) Supports videos up to 5 minut