OpenAI disbands mission alignment team

OpenAI has reportedly disbanded its dedicated mission alignment (or superalignment) team, signaling a major internal restructuring of how the company approaches long-term AI safety, governance, and model behavior research. Instead of operating as a standalone unit, safety and alignment responsibilities are being distributed across product, infrastructure, and research teams.

The move has sparked widespread debate among AI researchers, policymakers, and industry observers, especially at a time when AI systems are becoming increasingly powerful and widely deployed across business, education, and consumer applications.

Background: What Was the Mission Alignment Team?

The mission alignment team, often referred to as the superalignment group, was formed to address one of the most critical challenges in artificial intelligence: ensuring that advanced AI systems behave in ways that align with human values and intentions.

Its responsibilities included:

- Studying long-term risks from highly capable AI systems

- Developing techniques to monitor and evaluate AI outputs

- Researching methods to control advanced AI behavior

- Designing safeguards against unintended model actions

- Preparing safety frameworks for future artificial general intelligence (AGI)

The group was considered one of the industry’s most ambitious efforts to anticipate the long-term consequences of increasingly autonomous AI systems.

Why OpenAI Is Restructuring AI Safety Work

Reports suggest OpenAI is shifting from a centralized safety organization to a distributed safety model, embedding alignment practices into multiple teams rather than maintaining a single dedicated research group.

Possible reasons behind the move include:

- Accelerating AI product development cycles

- Integrating safety directly into engineering workflows

- Encouraging cross-functional collaboration between safety researchers and product teams

- Reducing organizational silos between research and deployment groups

Supporters of the change argue that safety becomes more practical when it is integrated into every stage of development instead of isolated within a single department.

Leadership Changes and Research Departures

The restructuring comes amid broader leadership transitions within OpenAI’s research divisions. Several high-profile researchers associated with long-term alignment efforts have reportedly departed or moved into different roles.

These changes have led to speculation about how OpenAI prioritizes long-term safety research compared with short-term commercial innovation.

Some industry analysts believe talent shifts are part of the natural evolution of rapidly scaling AI organizations, while critics worry that long-term risk research could lose institutional focus.

Industry Reaction: Safety vs Speed Debate

The news has reignited a long-standing debate in the AI community:

Supporters Say:

- Integrating safety into all teams increases accountability

- Alignment research should be practical and embedded in real products

- Faster development is necessary to stay competitive in a rapidly evolving industry

Critics Argue:

- Dedicated safety teams provide deeper long-term research focus

- Commercial pressures may overshadow alignment work

- Centralized oversight is necessary for powerful AI systems

AI governance experts note that as models become more capable, organizational structures around safety will have increasing global implications.

What This Means for AI Development

The restructuring could reshape how AI safety research is conducted across the industry:

- Safety could become more integrated into everyday development

- Research might focus more on practical deployment challenges

- Long-term existential risk studies may shift toward external institutions

- Competitive pressures between AI companies could influence safety investments

As OpenAI continues expanding its AI products and enterprise partnerships, embedding alignment into product pipelines may change how new models are tested, released, and monitored.

Regulatory and Policy Implications

Governments worldwide are closely watching how major AI companies manage safety responsibilities. Regulatory bodies may scrutinize whether decentralized safety models provide sufficient oversight.

Potential impacts include:

- Increased calls for external AI auditing

- Greater government involvement in AI safety standards

- New transparency requirements for model training and evaluation

- Expansion of international AI governance frameworks

As AI becomes more deeply integrated into critical infrastructure and decision-making systems, internal organizational choices could influence global regulatory policies.

Competitive Landscape

Other major AI companies are also experimenting with how to structure safety research:

- Some maintain centralized ethics and alignment teams

- Others embed safety researchers directly within engineering groups

- Hybrid models combining independent oversight with integrated development are becoming more common

OpenAI’s restructuring may influence how startups and large tech firms approach AI governance moving forward.

Final Thoughts

OpenAI’s decision to disband or restructure its mission alignment team reflects a broader transformation in the artificial intelligence industry, as companies try to balance rapid innovation with responsible deployment.

Whether decentralizing alignment efforts leads to stronger real-world safety or reduces long-term oversight remains an open question. As AI systems continue advancing toward greater autonomy and complexity, how companies structure their safety programs will play a critical role in shaping the technology’s future impact on society.

Frequently Asked Questions (FAQ)

What was OpenAI’s mission alignment team?

It was a research group focused on ensuring advanced AI systems behave safely and align with human values and intentions.

Has OpenAI stopped working on AI safety?

No. Safety work is reportedly being integrated across multiple research and product teams instead of remaining centralized.

Why did OpenAI restructure the team?

Reports suggest the aim is to embed safety directly into product development workflows and reduce organizational silos between research and deployment groups.

Are researchers leaving OpenAI?

Some leadership and research changes have been reported, though this is common during large organizational transitions.

Does this make AI less safe?

Experts are divided: some believe integration improves real-world safety, while others worry about reduced long-term oversight.

How might regulators respond?

Governments may increase oversight, transparency requirements, and safety standards as AI systems become more powerful.

Mentioned in this article

Tools

ChatGPT Atlas

ChatGPT Atlas is a next-generation AI-powered intelligence platform designed to map, organize, an

ChatGPT Atlas is a next-generation AI-powered intelligence platform designed to map, organize, and generate knowledge at scale. Built on advanced natural language processing (NLP) and large language models , ChatGPT Atlas helps users research faster, create high-quality content, analyze comple

Phonely AI

Phonely AI revolutionizes customer communication with its advanced AI-powered answering services tai

Phonely AI revolutionizes customer communication with its advanced AI-powered answering services tailored for both support and sales calls. This innovative platform leverages sophisticated voice AI technology to provide seamless, intelligent, and efficient interactions, ensuring that every customer

Solace Vision

Solace Vision is an innovative AI-powered text-to-3D creation tool that enables users to generate

Solace Vision is an innovative AI-powered text-to-3D creation tool that enables users to generate detailed 3D models and scenes from simple text prompts. It addresses the challenge of complex and time-consuming 3D modeling by leveraging artificial intelligence to translate natural language into t

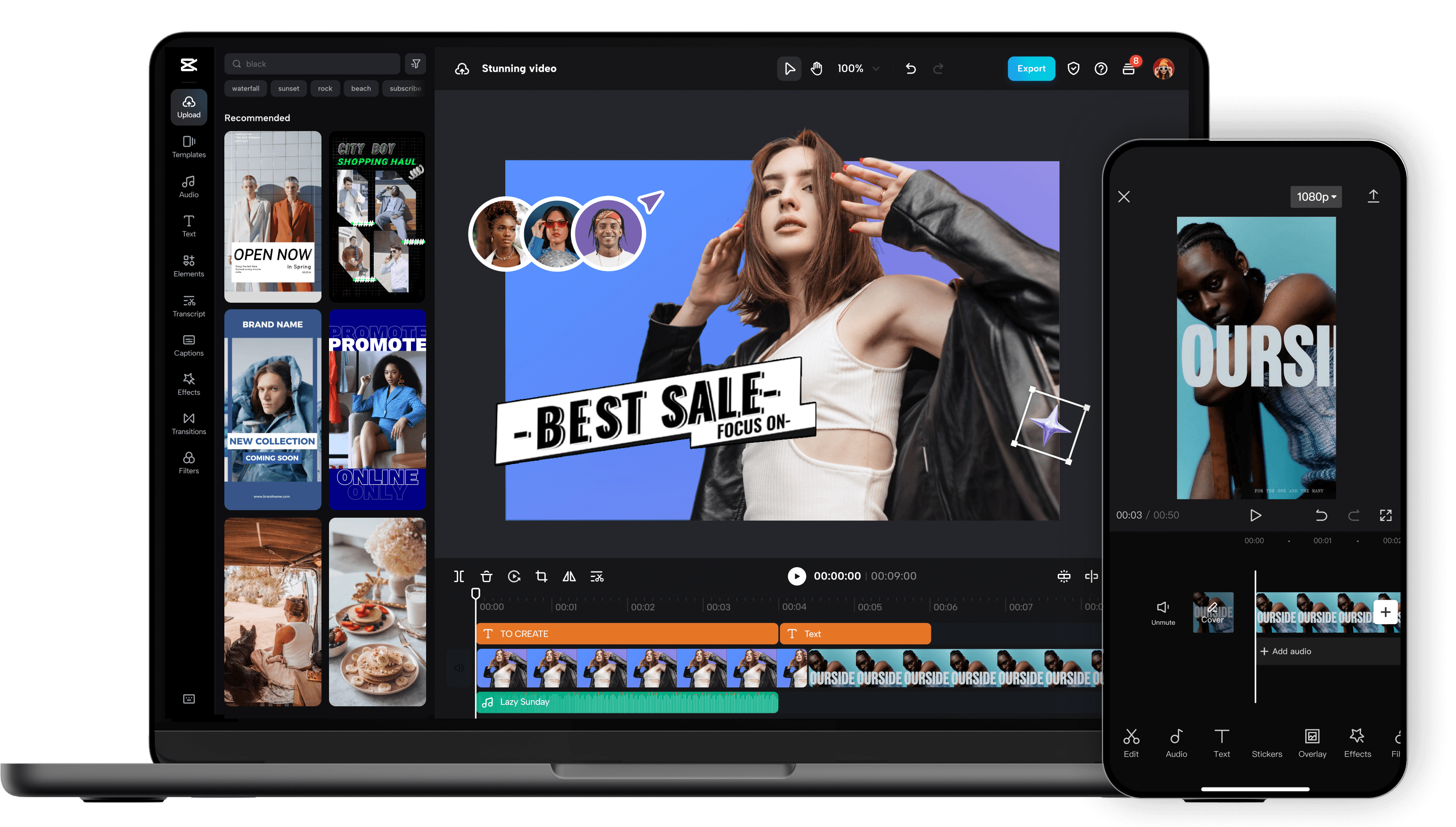

CapCut AI Video Editor

Unleash your creativity and transform your videos with CapCut AI Video Editor, the ultimate all-in-o

Unleash your creativity and transform your videos with CapCut AI Video Editor, the ultimate all-in-one mobile editing suite designed for creators, influencers, and anyone looking to make their content shine. Whether you're a seasoned pro or just starting out, CapCut's intuitive interface and powerfu

Chrome Webstore

The Chrome Web Store is Google’s official marketplace for browser extensions and themes created fo

The Chrome Web Store is Google’s official marketplace for browser extensions and themes created for the Google Chrome browser. It serves as a central hub where users can discover, install, manage, and update tools that extend Chrome’s functionality and personalize the browsing experience. As of 20

Adobe Podcast

Adobe Podcast is your all-in-one AI-powered solution for creating professional-quality audio content

Adobe Podcast is your all-in-one AI-powered solution for creating professional-quality audio content, whether you're a seasoned podcaster, a budding content creator, or a business looking to enhance your audio presence. Gone are the days of struggling with complex audio editing software and expensiv

Fathom AI Notetaker

Fathom AI Notetaker is an AI-powered meeting assistant that automatically records, transcribes, and

Fathom AI Notetaker is an AI-powered meeting assistant that automatically records, transcribes, and summarizes online meetings, eliminating the need for manual note-taking. This tool addresses the common problem of inefficient meeting follow-ups and lost information by leveraging artificial intelli

Elicit: AI for scientific research

Unlock the future of scientific discovery with Elicit, the revolutionary AI-powered research assista

Unlock the future of scientific discovery with Elicit, the revolutionary AI-powered research assistant designed to accelerate your workflow and deepen your understanding. Tired of endless hours sifting through mountains of academic papers, struggling to synthesize complex information, and facing wri

Clockwise: AI Powered Time Management Calendar

Unlock Peak Productivity with Clockwise: The AI-Powered Time Management Calendar That Works for You

Unlock Peak Productivity with Clockwise: The AI-Powered Time Management Calendar That Works for You Tired of back-to-back meetings eating into your focus time? Struggling to find blocks for deep work, personal appointments, or even just a breather? Introducing Clockwise, the revolutionary AI-powered

Runway | Building AI to Simulate the World

Runway is at the forefront of a revolutionary movement, harnessing the power of Artificial Intellige

Runway is at the forefront of a revolutionary movement, harnessing the power of Artificial Intelligence to build sophisticated simulations of the world around us. Imagine a digital twin of reality, a dynamic, interactive environment where complex phenomena can be explored, understood, and even manip

Meta Ai

Meta AI is an advanced artificial intelligence assistant and generative AI platform developed to

Meta AI is an advanced artificial intelligence assistant and generative AI platform developed to enhance communication, creativity, and productivity across Meta’s ecosystem. Powered by large language models, machine learning, and multimodal AI , Meta AI helps users generate content, answer ques