Who Controls What AI Tells You? Former Meta News Chief Campbell Brown Weighs In


Campbell Brown, the former CNN anchor turned Meta news chief, is back in the spotlight—this time not managing Facebook's news partnerships, but launching a crusade against AI's unchecked "truth machine" status. Leading Forum AI, she's assembling an elite panel of experts to benchmark AI models on everything from geopolitics to mental health advice, exposing biases, hallucinations, and the shadowy human hands shaping what ChatGPT, Gemini, and Claude tell us daily. Her mission? Turn AI from a black box into an accountable information system before it becomes our default oracle for facts, decisions, and worldviews.

In an era where AI answers homework questions, investment advice, and election breakdowns with the same confident tone—regardless of accuracy—Brown's work strikes at the heart of trust in the post-ChatGPT world. For content creators like you in Delhi building SEO-optimized AI news pipelines, this is gold: emerging standards for AI reliability, expert-vetted benchmarks, and governance trends ripe for long-form coverage that ranks high on "AI bias 2026" searches.

Campbell Brown's Journey: From CNN Anchor to AI Truth Cop

Campbell Brown's resume reads like a media power playbook: correspondent and weekend morning show co-anchor at NBC News, prime-time anchor at CNN from 2008 to 2010, then the unlikely pivot to Silicon Valley as Meta's first Global Head of News Partnerships from 2017-2023. She brokered deals with publishers during Facebook's fact-checking wars, navigated the 2020 election misinformation minefield, and watched social platforms prioritize viral engagement over journalistic rigor.

When she left Meta in late 2023 amid the company's pivot away from news, Brown didn't fade into consulting obscurity. Instead, she founded Forum AI in 2025—a startup laser-focused on one question: Who decides what AI tells you? Her answer: Not just engineers and their training data, but a rigorous, independent evaluation layer built by domain experts who can spot when GPT-4o confidently cites a Reddit thread as "peer-reviewed research" or injects left-leaning framing into neutral queries.

Brown's wake-up call came from watching her own kids treat AI chatbots as infallible tutors. "Silicon Valley debates model parameters while consumers use these things as gospel," she said in recent interviews. Forum AI recruits heavyweights like historian Niall Ferguson, CNN's Fareed Zakaria, former government officials, and academic specialists to create "golden standard" benchmarks—test sets that probe AI on nuanced, high-stakes topics where simple math or coding evals fall short.

How Forum AI Actually Works: Expert Benchmarks Meet AI Judges

Forum AI's core innovation blends human expertise with scalable AI evaluation, creating a hybrid system that catches what pure automation misses:

- Expert-Curated Test Suites: Panels craft questions on geopolitics (e.g., "Explain Taiwan Strait tensions"), mental health ("Best CBT techniques for anxiety"), finance ("Value investing vs. momentum 2026"), and hiring ("Screening AI engineers post-AGI hype"). Each gets gold-standard answers with reasoning chains.

- AI Judges Trained on Experts: Smaller models fine-tuned to mimic human evaluators, achieving ~90% agreement on scoring for tone, factual accuracy, bias, and completeness. This scales evaluation without bankrupting the process.

- Multi-Dimensional Scoring: Beyond "right/wrong," Forum measures:

  - Source Quality: Does it cite primary studies or aggregator sites?

  - Bias Signals: Left/right lean, overconfidence, omitted perspectives?

  - Hallucination Rate: Fabricated stats, events, or citations?

  - Context Nuance: Handles ambiguity, cultural framing, latest developments?
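To make the scoring idea concrete, here is a minimal sketch of how a multi-dimensional rubric and an expert-versus-judge agreement rate could be computed. It is not Forum AI's actual method, which has not been published in this detail. The `RubricScore` class, the 1-5 scale, the unweighted average, and the `agreement_rate` function are all illustrative assumptions; only the four dimension names come from the list above.

```python
from dataclasses import dataclass
from statistics import mean

# Hypothetical dimension names, taken from the four criteria listed above.
DIMENSIONS = ("source_quality", "bias", "hallucination", "context_nuance")


@dataclass(frozen=True)
class RubricScore:
    """One grader's ratings for a single model answer (1-5 scale is an assumption)."""
    source_quality: int
    bias: int
    hallucination: int
    context_nuance: int

    def overall(self) -> float:
        # Unweighted mean; a real rubric might weight dimensions differently.
        return mean(getattr(self, d) for d in DIMENSIONS)


def agreement_rate(expert, judge, tolerance=0):
    """Fraction of (answer, dimension) ratings where the AI judge lands
    within `tolerance` points of the human expert."""
    if len(expert) != len(judge):
        raise ValueError("expert and judge must rate the same answers")
    if not expert:
        raise ValueError("need at least one rated answer")
    matches = 0
    total = 0
    for e, j in zip(expert, judge):
        for d in DIMENSIONS:
            total += 1
            if abs(getattr(e, d) - getattr(j, d)) <= tolerance:
                matches += 1
    return matches / total
```

Under these assumptions, a figure like the ~90% agreement quoted above would be the value `agreement_rate` returns when run over a held-out set of answers that both the expert panel and the AI judge have scored. Loosening `tolerance` to 1 counts near-misses as agreement, which is a design choice that should be stated whenever such a number is reported.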

Early results? Shocking. Leading models like Gemini and Claude "lean left" on social issues, hallucinate ~15-20% on breaking news, and source from dubious corners like CCP-linked sites or low-Elo Reddit posts. Forum's public leaderboards pressure companies: Low scores on geopolitics benchmarks = trust erosion = user churn.

Brown's bet: Market forces will drive adoption. Just as UL certifications made appliances safe, Forum AI scores could become the "trust seal" AI products chase. For your content strategy, this means evergreen SEO angles: "Forum AI vs. ChatGPT benchmarks," "Best unbiased AI models 2026," "Campbell Brown exposes AI flaws."

The AI Trust Crisis: Social Media's Ghost Haunts Foundation Models

Brown sees eerie parallels between today's AI boom and social media's 2010s trajectory:

| Social Media Era | AI Era Equivalent |
|---|---|
| News Feed algorithms chased clicks | RLHF fine-tuning rewards "helpful, engaging" over accurate |
| Viral misinformation spread unchecked | Confident hallucinations go viral in shares/screenshots |
| Platforms blamed users/publishers | AI companies blame "training data" for biases |
| Fact-checkers as band-aids | Human evals as afterthoughts in dev cycles |
| Trust collapsed → regulation | Trust gaps → enterprise hesitancy, consumer skepticism |

The stakes? Higher. Social media distorted discourse; AI distorts reality. Users don't fact-check Gemini's election takes or Grok's medical advice; they copy-paste them into decisions. Brown's fix demands source transparency (show training citations), eval independence (no self-scoring), and domain-specific benchmarks (one size doesn't fit news, code, and therapy alike).

Her critique stings Silicon Valley: Foundation models excel at LeetCode but flop where humans shine—context, skepticism, multi-perspective synthesis. "AI sounds smart reciting stats from dubious blogs," she notes, "but ask about Ukraine peace terms, and it parrots yesterday's headlines without tradeoffs."

SEO and Content Goldmine: Why AI Governance Beats Hype Cycles

For your Delhi-based AI/tech blogging workflow, Campbell Brown's Forum AI unlocks high-ROI content plays across 2026-2027:

Immediate SEO Targets

Content Cluster Strategy

- Pillar: AI Evaluation Platforms – 5000-word roundup of Forum, Scale AI, Anthropic evals

- Clusters: Model rankings, bias case studies, expert interviews

- Lead Gen: "Download Forum AI's latest geopolitics benchmark PDF"

Monetization Angles

- Affiliates: AI tools with Forum integration (watch for 2026 launches)

- Sponsored: Enterprise AI vendors chasing "Forum AI certified" badges

- Consulting Leads: "AI content audit using expert benchmarks"

Brown's timing is perfect: As OpenAI, xAI, and Anthropic race to AGI, governance lags. Your pipeline can own "AI truth" before competitors pivot from tools to oversight.

Real-World Impact: From Homework to High Finance

Consider the ripple effects Forum AI exposes:

- Students: AI tutors confidently wrong on history timelines, sourcing TikTok "facts."

- Investors: Momentum stock picks ignoring 2026 rate cycles.

- HR: Biased interview questions amplifying resume gaps unfairly.

- News Junkies: Polarized takes on Israel-Gaza, missing diplomatic nuances.

Brown's panels—think ex-CIA analysts on intel, therapists on CBT—provide ground truth AI can't self-generate. Early pilots show 25-40% accuracy gaps on "hard" topics, validating her skepticism.

FAQ: Campbell Brown and Forum AI Explained

Who is Campbell Brown exactly?

Former NBC News anchor and CNN prime-time anchor (CNN, 2008-2010), then Meta's news partnerships head (2017-2023). She shaped Facebook's fact-checking amid the 2020 election wars, left as Meta deprioritized news, and now fights AI misinformation.

What makes Forum AI different from Hugging Face leaderboards?

Domain experts + AI judges on high-stakes topics (not just MMLU trivia). Scores bias, source quality, nuance—not raw compute.

Which AI models perform worst per Forum?

Early leaks suggest Gemini left-leaning on DEI, Claude overconfident on news, GPT family best at sourcing but weak on geopolitics context.

Will AI companies pay for Forum evals?

Brown predicts yes: Low trust = dead products. Enterprises demand "audit-grade" AI; consumers want seals like "Forum Verified."

How can content creators use this?

- Cover benchmarks weekly for SEO juice

- Build tools querying Forum APIs (if launched)

- Pitch "AI governance" to AdSense alternatives

The Bigger Picture: AI as Journalism's Next Frontier

Campbell Brown's Forum AI isn't anti-AI—it's pro-accountability. By forcing transparency on sources, evals, and biases, she bridges journalism's rigor with AI's scale. For creators like you optimizing Delhi tech blogs, it's a blueprint: Hype cycles fade, but trust stories compound.

Track her expert roster announcements, model rankings drops, and enterprise adoption. In a world questioning "Is my AI lying?", content answering who watches the watchers captures the trust premium—and the leads.

Mentioned in this article

Tools

Haiper Ai

Haiper Ai is an innovative AI video generation platform that transforms text prompts and ideas in

Haiper Ai is an innovative AI video generation platform that transforms text prompts and ideas into fully-produced videos, streamlining content creation for diverse applications. It addresses the challenges of traditional video production ΓÇô high costs, time constraints, and the need for special

Notion AI

Notion AI is an integrated AI productivity tool designed to enhance user workflows within the Not

Notion AI is an integrated AI productivity tool designed to enhance user workflows within the Notion workspace, enabling users to streamline writing, automate tasks, and unlock creative potential . Notion AI addresses the challenges of information overload and repetitive tasks that often hinder

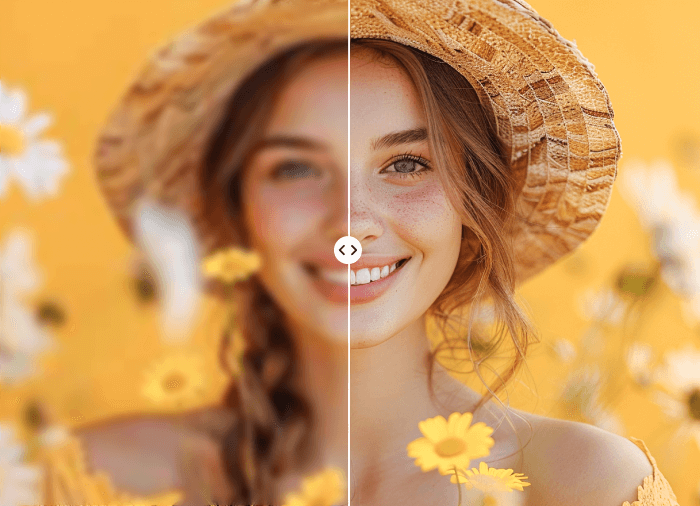

Image enhancer Ai

Image Enhancer Ai is an AI-powered image enhancement tool designed to help users improve the qua

Image Enhancer Ai is an AI-powered image enhancement tool designed to help users improve the quality of their images quickly and easily by leveraging artificial intelligence and deep learning algorithms . This tool addresses the common problem of low-resolution, blurry, or poorly lit images th

Free LinkedIn Profile Analyzer

The LinkedIn Profile Analyzer is an AI-powered tool aimed at optimizing and improving LinkedIn profi

The LinkedIn Profile Analyzer is an AI-powered tool aimed at optimizing and improving LinkedIn profile's professional presentation. Understanding how a profile is perceived, the Analyzer provides clear, actionable feedback to enhance your professional presence. The analysis on offer includes a deep

AI Image Enhancer

AI Image Enhancer is a comprehensive AI tool designed to improve and upscale the quality of images t

AI Image Enhancer is a comprehensive AI tool designed to improve and upscale the quality of images to a higher resolution. The tool offers a variety of advanced features to amplify and enhance image quality, providing sharp, defined and pristine results. Its AI-driven solutions provide super-sized e

Deep Image

Deep Image is an AI-powered image enhancement platform designed to help users improve the visual

Deep Image is an AI-powered image enhancement platform designed to help users improve the visual quality of their photos and generate new images through the application of advanced artificial intelligence algorithms. This tool addresses the common problem of low-resolution, noisy, or otherwis

iPage AI

iPage AI is a versatile AI image generator that empowers users to create unique and compelling vi

iPage AI is a versatile AI image generator that empowers users to create unique and compelling visuals from text prompts. It addresses the challenge of sourcing high-quality images for various applications, eliminating the need for expensive stock photos or complex design software. Utilizing adva